a probably less known feature of the oracle database is the database filesystem (dbfs). in short dbfs provides a filesystem interface on top of database tables accessible through standard operating system commands. storing files inside the database becomes as easy as creating files on the operating system level. there is no need to use a programming language to store blobs/clobs inside the database.

for demonstrating dbfs this post will show how to create a subversion repository which actually resides in the oracle database. this is not intended for any production use and shall only show that is basically works.

because I am on linux and want to mount the database filesystem on the operating system I will need to install the kernel-devel and fuse packages and give execute privileges to the fusermount command:

su- yum install kernel-devel fuse fuse-libs chmod +x /bin/fusermount

next I’ll create the configuration file for fuse:

touch /etc/fuse.conf echo "user_allow_other" > /etc/fuse.conf

the mountpoint for holding my database filesystem and subversion repository will be /oradata/DB112/svn owned by the database owner:

su - oracle mkdir /oradata/DB112/svn

as all the files will reside inside the oracle database I will create a separate user:

su - oracle sqlplus / as sysdba CREATE USER svn IDENTIFIED by "svn" DEFAULT TABLESPACE USERS TEMPORARY TABLESPACE TEMP; GRANT CREATE SESSION TO svn; GRANT dbfs_role TO svn; GRANT RESOURCE TO svn;

the script that creates the dbfs “MYSVNFS” which will be stored in the “USERS” tablespace is:

connect svn/svn @$ORACLE_HOME/rdbms/admin/dbfs_create_filesystem.sql USERS MYSVNFS

the output should be similar to this:

No errors.

--------

CREATE STORE:

begin dbms_dbfs_sfs.createFilesystem(store_name => 'FS_MYSVNFS', tbl_name =>

'T_MYSVNFS', tbl_tbs => 'USERS', lob_tbs => 'USERS', do_partition => false,

partition_key => 1, do_compress => false, compression => '', do_dedup => false,

do_encrypt => false); end;

--------

REGISTER STORE:

begin dbms_dbfs_content.registerStore(store_name=> 'FS_MYSVNFS', provider_name

=> 'sample1', provider_package => 'dbms_dbfs_sfs'); end;

--------

MOUNT STORE:

begin dbms_dbfs_content.mountStore(store_name=>'FS_MYSVNFS',

store_mount=>'MYSVNFS'); end;

--------

CHMOD STORE:

declare m integer; begin m := dbms_fuse.fs_chmod('/MYSVNFS', 16895); end;

No errors.

the client program used to mount the filesystem is called dbfs_client and is located under $ORACLE_HOME/bin. the proper way in regards to security would be to use an oracle wallet for storing the password but as this is only a test I will do it the easy way ( if someone is interested on how to setup the wallet way, just let me know ):

echo "svn" > /home/oracle/passwordfile nohup dbfs_client svn@DB112 /oradata/DB112/svn < /home/oracle/passwordfile &

if everything worked the new mount is now visible with the os commands:

df -h Filesystem Size Used Avail Use% Mounted on /dev/hdc1 28G 11G 15G 43% / tmpfs 741M 456M 286M 62% /dev/shm dbfs-svn@DB112:/ 1.1G 160K 1.1G 1% /oradata/DB112/svn ## ls -la /oradata/DB112/svn/MYSVNFS/ total 0 drwxrwxrwx 3 root root 0 May 4 01:10 . drwxr-xr-x 3 root root 0 May 4 01:15 .. drwxr-xr-x 7 root root 0 May 4 01:10 .sfs

now I am ready to create the subversion repository:

su - oracle cd /oradata/DB112/svn/MYSVNFS/ svnadmin create MYREPO

for being able to access the repository later on from another user I’ll add the user to the subversion password file:

cat MYREPO/conf/passwd [users] svn = svn

… and uncomment the following line in the svnserve.conf file:

password-db = passwd

… and start the subversion deamon:

svnserve -d -r /oradata/DB112/svn/MYSVNFS

to simulate real-life subversion usage I will create a new os user and do some work on the subversion repository ( I could have done this from another workstation, too ):

su - useradd svn passwd svn su - svn

the first thing I need to do is to checkout the repository:

svn co svn://localhost/MYREPO/ cd MYREPO ls -la

lets copy some files ( text and binary ) to populate the repository ( don’t care if not all files are copied because of permissions ):

cp /etc/*.conf . cp /bin/cpio . ls -la

as I now have some files I’ll add them to the repository and commit ( commit means pushing to the subversion server here ):

svn add * svn commit -m "blabla" *.conf --username=svn svn commit -m "blabla" cpio

done. all files committed to the subversion server and stored inside the oracle database. don’t believe it ?

su - oracle sqlplus / as sysdba set lines 164 col object_name for a20 col object_type for a15 SELECT object_name,object_type FROM all_objects WHERE owner = 'SVN'; ## OBJECT_NAME OBJECT_TYPE -------------------- --------------- T_MYSVNFS TABLE IG_SFS$_FST_19 INDEX IP_SFS$_FST_19 INDEX IPG_SFS$_FST_19 INDEX SFS$_FSTP_19 TABLE SYS_C005052 INDEX ## col item for a30 col pathname for a40 select item ,PATHNAME from svn.T_MYSVNFS; ## ITEM PATHNAME ------------------------------ ---------------------------------------- MYREPO /MYREPO locks /MYREPO/locks db.lock /MYREPO/locks/db.lock db-logs.lock /MYREPO/locks/db-logs.lock hooks /MYREPO/hooks start-commit.tmpl /MYREPO/hooks/start-commit.tmpl pre-commit.tmpl /MYREPO/hooks/pre-commit.tmpl post-revprop-change.tmpl /MYREPO/hooks/post-revprop-change.tmpl conf /MYREPO/conf authz /MYREPO/conf/authz db /MYREPO/db fs-type /MYREPO/db/fs-type revs /MYREPO/db/revs 0 /MYREPO/db/revs/0 revprops /MYREPO/db/revprops 0 /MYREPO/db/revprops/0 transactions /MYREPO/db/transactions txn-protorevs /MYREPO/db/txn-protorevs write-lock /MYREPO/db/write-lock uuid /MYREPO/db/uuid 0 /MYREPO/db/revs/0/0 0 /MYREPO/db/revprops/0/0 fsfs.conf /MYREPO/db/fsfs.conf rep-cache.db /MYREPO/db/rep-cache.db min-unpacked-rev /MYREPO/db/min-unpacked-rev txn-current-lock /MYREPO/db/txn-current-lock format /MYREPO/db/format format /MYREPO/format passwd /MYREPO/conf/passwd svnserve.conf /MYREPO/conf/svnserve.conf pre-revprop-change.tmpl /MYREPO/hooks/pre-revprop-change.tmpl pre-lock.tmpl /MYREPO/hooks/pre-lock.tmpl pre-unlock.tmpl /MYREPO/hooks/pre-unlock.tmpl ROOT / .sfs /.sfs attributes /.sfs/attributes tools /.sfs/tools snapshots /.sfs/snapshots RECYCLE /.sfs/RECYCLE content /.sfs/content post-commit.tmpl /MYREPO/hooks/post-commit.tmpl post-lock.tmpl /MYREPO/hooks/post-lock.tmpl post-unlock.tmpl /MYREPO/hooks/post-unlock.tmpl README.txt /MYREPO/README.txt 1 /MYREPO/db/revs/0/1 txn-current /MYREPO/db/txn-current 2 /MYREPO/db/revs/0/2 1 /MYREPO/db/revprops/0/1 current /MYREPO/db/current 2 /MYREPO/db/revprops/0/2

that’s the subversion repository stored in the oracle database.

remember this is only for educational purposes, but imagine what you could do with it:

- back up the repository with rman

- use rman to duplicate the database for testing purposes

- setup rac one node, integrate the subversion daemon with the oracle grid infrastructure and make the repository high available

- create multiple dbfs and multiple svn repositories in one oracle database

- enable deduplication for the repository files ( see * )

- enable compression for the repository files ( see ** )

oracle uses secure files to store the files located in the dbfs. this means you may use all the features secure files provide. check the examples for an overview.

*

alter table svn.T_MYSVNFS modify lob(FILEDATA) (deduplicate);

**

alter table svn.T_MYSVNFS modify lob(FILEDATA) (compress high);

as said above, this is only educational but i’d be happy to start a discussion about the pros and cons ( beside the licensing costs, of course ) …. what would be a good use case to use dbfs for ?

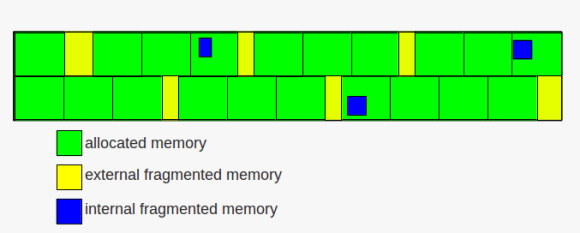

memory fragmentation

memory fragmentation